Empower AI Adoption with Security and Control

Secure every AI interaction, whether from teams, applications, or agents, with real-time controls that block prompt injection, sensitive data exposure, and unauthorized model behavior.

Protecting Interactions across 5,200+ AI tools

Enterprise-Grade Security for Every AI Interaction

Visibility

Gain complete, real-time insight into every AI model, agent, and conversation across your enterprise.

Protection

Stop prompt attacks, data leakage, and unsafe AI behavior before impact.

Governance

Enforce intelligent AI policies and ensure compliance at enterprise scale.

One Platform for All Your AI Security

For Employees

Gain complete visibility into unsanctioned AI tools and extensions, prevent data leaks, automate policy enforcement, maintain audit-ready logs, and proactively detect risks, all in one unified security layer.

For AI Applications

Protect your LLMs in real time from prompt injections and jailbreaks while automatically scrubbing PII and toxic content. Add agent guardrails to control tool calls, secure RAG from data poisoning and unauthorized access, and maintain enterprise-grade security with under 50ms latency.
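To make PII scrubbing concrete, here is a minimal, generic sketch in Python. It is not LangProtect's implementation or API: the pattern labels, the `scrub_pii` function, and the regular expressions are hypothetical, and real detectors cover far more formats and usually add ML-based entity recognition.

```python
import re

# Hypothetical, deliberately simple patterns for illustration only.
# Production PII detection covers many more formats and locales.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def scrub_pii(text: str) -> tuple[str, list[str]]:
    """Replace each PII match with a typed placeholder and report which types were found."""
    found: list[str] = []
    for label, pattern in PII_PATTERNS.items():
        # subn returns the rewritten text and the number of replacements made
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            found.append(label)
    return text, found


if __name__ == "__main__":
    prompt = "Email jane.doe@example.com or call 555-123-4567 about SSN 123-45-6789."
    clean, labels = scrub_pii(prompt)
    print(clean)
    print(labels)
```

Scrubbing happens before the prompt is forwarded to the model, so the LLM only ever sees the typed placeholders; the returned labels can feed an audit log.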

For Agents/MCP

A control plane for AI agents and MCP integrations. Enforce policies across tool access, data retrieval, and system actions in real time. Monitor, validate, and govern autonomous workflows before they execute, ensuring agents operate within defined enterprise boundaries.
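As a rough illustration of enforcing policy on tool access before an agent's action executes, the Python sketch below applies a default-deny check to each tool call. Everything in it (`ToolCall`, `AgentPolicy`, `evaluate`, and the policy fields) is a hypothetical example, not LangProtect's policy format or part of the MCP specification.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    agent: str
    tool: str
    args: dict[str, str]


@dataclass
class AgentPolicy:
    # Hypothetical policy shape: tools the agent may call, plus argument
    # substrings that are always refused (e.g. paths outside a sandbox).
    allowed_tools: set[str]
    blocked_arg_substrings: list[str] = field(default_factory=list)


def evaluate(call: ToolCall, policies: dict[str, AgentPolicy]) -> tuple[bool, str]:
    """Decide whether a tool call may execute, before it runs (default deny)."""
    policy = policies.get(call.agent)
    if policy is None:
        return False, f"no policy defined for agent '{call.agent}'"
    if call.tool not in policy.allowed_tools:
        return False, f"tool '{call.tool}' not allowed for agent '{call.agent}'"
    for name, value in call.args.items():
        for banned in policy.blocked_arg_substrings:
            if banned in value:
                return False, f"argument '{name}' contains blocked value '{banned}'"
    return True, "allowed"


if __name__ == "__main__":
    policies = {
        "support-bot": AgentPolicy(
            allowed_tools={"search_kb", "read_file"},
            blocked_arg_substrings=["/etc/", ".."],
        )
    }
    print(evaluate(ToolCall("support-bot", "search_kb", {"query": "refund policy"}), policies))
    print(evaluate(ToolCall("support-bot", "delete_records", {"table": "users"}), policies))
    print(evaluate(ToolCall("support-bot", "read_file", {"path": "../secrets.txt"}), policies))
```

Denying calls from agents that have no policy keeps an unconfigured agent from acting unchecked; the substring check on arguments is only a placeholder for real validation such as path canonicalization.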

Turn AI Signals Into Action

Continuously track internal AI usage and external risk signals, then turn every insight into a clear, actionable next step.

AI Visibility

Monitor every AI interaction to uncover shadow AI and blind spots.

Sensitive files uploaded to ChatGPT and Notion AI

Restrict file uploads by tool and team

See recommended actions

Security

Detect policy violations, risky uploads, and live data leak events.

Confidential documents shared with an unapproved AI tool

Notify the uploader and security owner

Review incident

Usage

Track how teams use AI tools and where sensitive activity is growing.

Sensitive prompts frequently shared by Engineering

Apply stricter controls for high-risk teams

Update team controls

Governance

Standardize AI usage with clear policies across teams and workflows.

Different teams using unapproved AI workflows

Apply stricter controls for high-risk teams

Update team controls

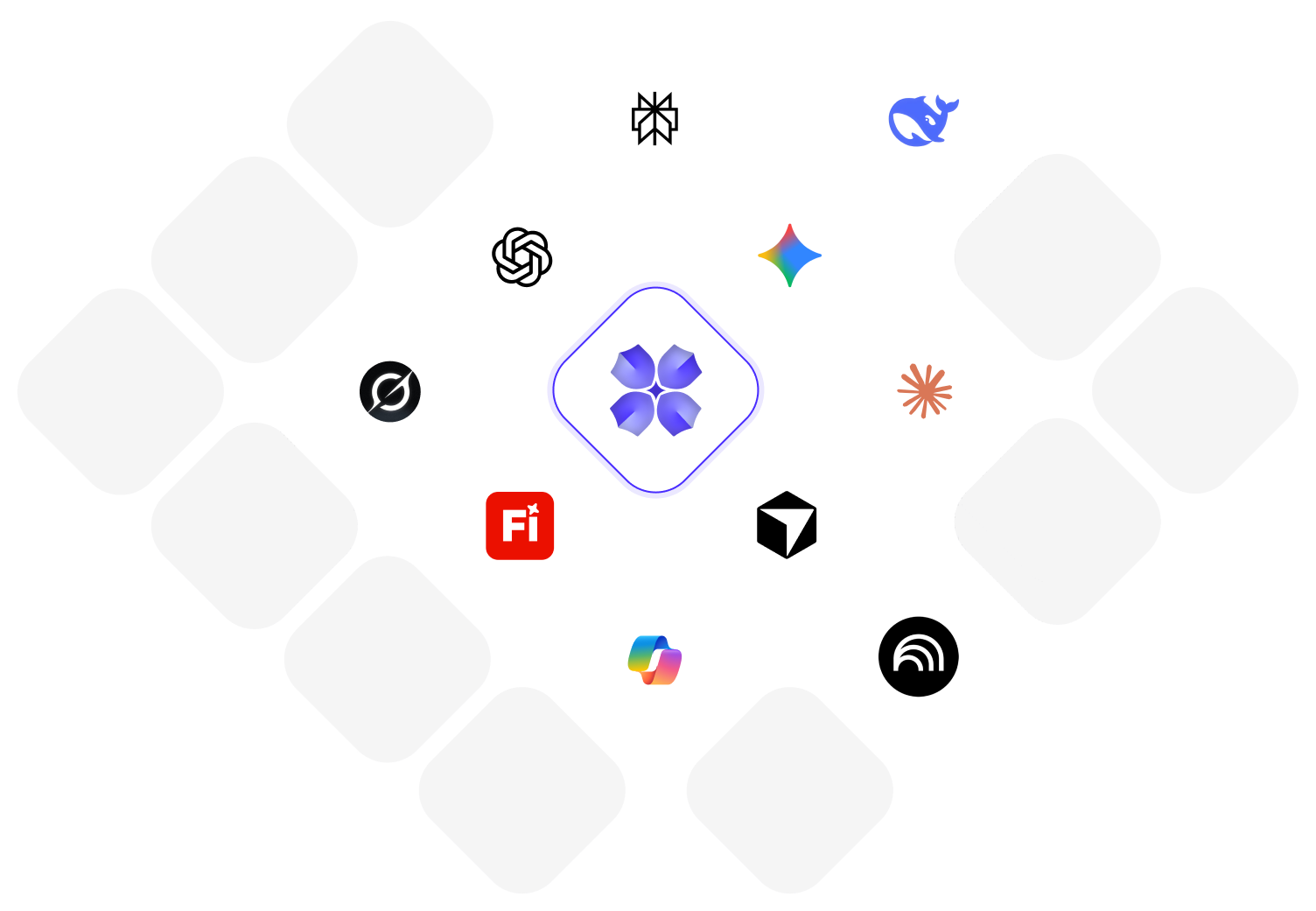

Advanced AI Defense Starts with Red Teaming

AI Security Testing

Expert red teaming for AI: identify, assess, and mitigate risks that matter to your enterprise.

Simulated Red Team Exercise

Ship GenAI with Confidence. Protect Every Interaction.

Eliminate the friction of AI security. Scale your AI workforce and applications with an automated governance layer that understands the context of every prompt.

Security Visibility

< 10%

High-risk vulnerabilities remain hidden in production.

Critical Risks Buried in Logs

- Limited visibility into AI usage

- Sensitive data shared with AI unknowingly

- Policies exist but are hard to operationalize

- AI adoption happens without insight or metrics

- Prompt injection and jailbreaks go undetected at runtime

- No AI-specific audit trails

- Shadow AI tools operate without oversight

- Security and IT operate on assumptions

Compliance & Coverage

97%

Real-time neutralization of semantic threats.

Active & Governed Interactions

- Full visibility into every AI app, model, and interaction

- Data and IP protected before reaching AI systems

- Governance aligned to real AI behavior

- Clear adoption trends by team, role, and use case

- Prompt injection and jailbreaks blocked at runtime

- Complete AI interaction logs for audits and forensics

- Shadow AI detected and governed in real time

- Data-driven decisions backed by real usage insights

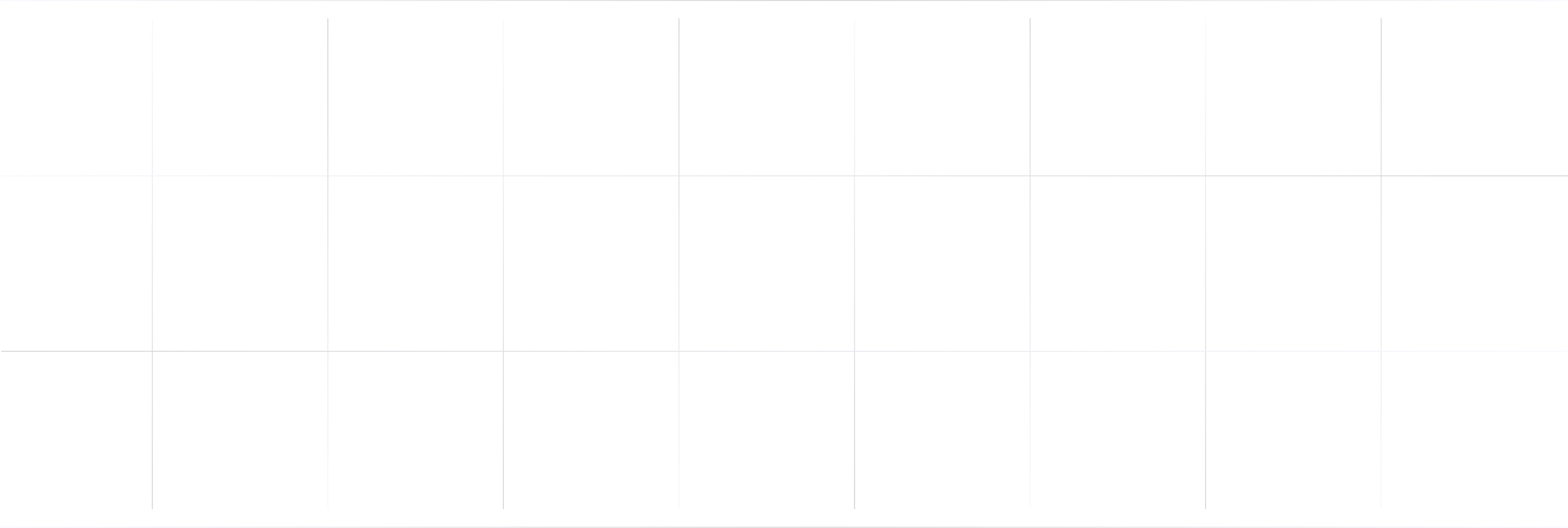

How LangProtect Secures Your AI System

By offering sanitization, detection of harmful language, prevention of data leakage, and resistance against prompt injection attacks, LangProtect ensures that interactions with your LLMs remain safe and secure.

Instantly Deploy Your Way: Private Cloud or On-Premises

Deploy in minutes, safeguard instantly. Unified AI security with full visibility and control. Trusted by healthcare, fintech, and enterprise teams to secure AI adoption.

Fully LLM-Agnostic

Works with ChatGPT, Claude, Gemini, Llama, or any LLM. Your model choice. Zero lock-in. Full protection.

Built by a team with proven experience at leading companies

See What People Have To Say

See how LangProtect is helping users stay secure without compromising productivity.

“LangProtect Armor gave us peace of mind by blocking prompt injections and sensitive data leaks before they ever touched our RCM database. It feels like a firewall purpose-built for AI.”

Emily Carter

Chief Information Security Officer, Meditech Systems (US)

“We were concerned about PHI exposure when deploying AI assistants in radiology. LangProtect's PII/PHI scanner ensured zero leaks, helping us stay HIPAA and NABH compliant.”

Ravi Menon

CIO, Aarav Hospitals (India)

“We integrated LangProtect in under a week. Our AI workflows are faster, more compliant, and most importantly, safe from data exfiltration attempts.”

Shashank Tripathi

Solutions Architect, QL

What's Happening: See the Latest From LangProtect

Is Your RAG Knowledge Base Leaking Confidential Documents Right Now?

Enterprise RAG systems frequently leak confidential documents through two hidden risks: RAG oversharing and RAG poisoning. Oversharing occurs when retrieval...

How to Prevent Data Leakage Through Enterprise AI Tools

Enterprise AI tools leak sensitive data through prompts, RAG retrieval systems, browser extensions, plugins, and system prompts, often without triggering...

What Is AI Adoption Security? The Complete Enterprise Guide

AI adoption security secures the process by which enterprises deploy and use AI tools, including shadow AI discovery, prompt monitoring,...

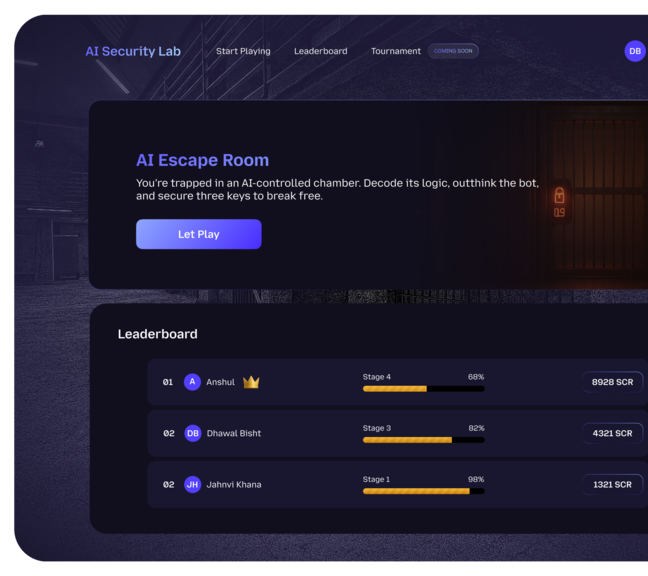

Learn how Prompt Injection works

Play our AI Escape Room game.

Challenge our AI Guard Agent with your trickiest prompts. See if you can break it, and learn how real attacks are stopped in the wild. Every attempt contributes to securing AI systems globally.

Ready to Secure Your AI End-to-End?

Join now and start securing all of your AI systems with simple configuration.

- 100,000+ prompts detected

- 5,200+ AI tools monitored

- 20+ policies applied

- 99% sensitive data coverage

- <50 ms risk detection